Introduction

This is the first part of a series about LLMs and how we use them at Quasar. This is a series because the marketing department told me it is better for SEO, engagement, or something, and who am I to contradict the marketing department?

A clarification: Quasar has no horse in this race.

Yes, Quasar is in the field of “AI”: we are an AI infrastructure company. We built a solution for large numerical data capture (for example: time-series), compression, storage, and restitution to enable forecasting and advanced analytics. Think: predictive maintenance, energy optimization, energy trade forecasting, etc.

However, we don’t sell or build LLMs or related tools; we do use LLMs daily for a wide range of tasks (marketing, security audits, software development, research, etc.), and we build large-scale data infrastructure that may eventually be fed into LLMs.

So why am I writing this? In approximately 100% of our meetings with clients (current or future), LLMs get mentioned. There’s so much hype and FUD out there, we collectively decided to summarize and publish our current understanding of the state of the art.

The other reason is that I care intensely about software and software quality, and we thought many of you would love some unbiased feedback. Also, I run this company, and I can do what I want, provided the marketing department allows it.

To clarify: This is not a Luddite post. I’m genuinely excited about recent advances in AI and very optimistic about future developments. Everyone at Quasar is actively using these new tools in one way or another. We have only scratched the surface!

In this first post, I’m going to do my best to explain how LLMs work, what they are, and what they are not. In the next posts, I will get into more detail about how we (Quasar) specifically use them, especially regarding software development.

Most modern large language models follow a design known as a GPT-style transformer, which generates text by predicting the next token in a sequence. In the rest of this post, when I refer to LLMs, I am mostly referring to systems built on this architecture.

Understanding what an LLM is and is not

When Deep Blue beat Kasparov in 1997, everyone thought that computers had become smarter than humans, because, yes, being good at chess requires a lot of cognitive power and long hours of practice. But it turns out, you can brute-force your way to being good at chess if you’re able to memorize every game played ever and can map every possible move. You cannot outsmart a computer at chess, but that doesn’t mean the computer is smart.

It’s important to have at least a superficial understanding of how your tool works to use it well. I’m no expert in the field, but I’ll do my best to explain, first with an analogy, then with a more formal description.

Spoiler alert: it’s going to break some of the “magic”.

LLM as an analogy

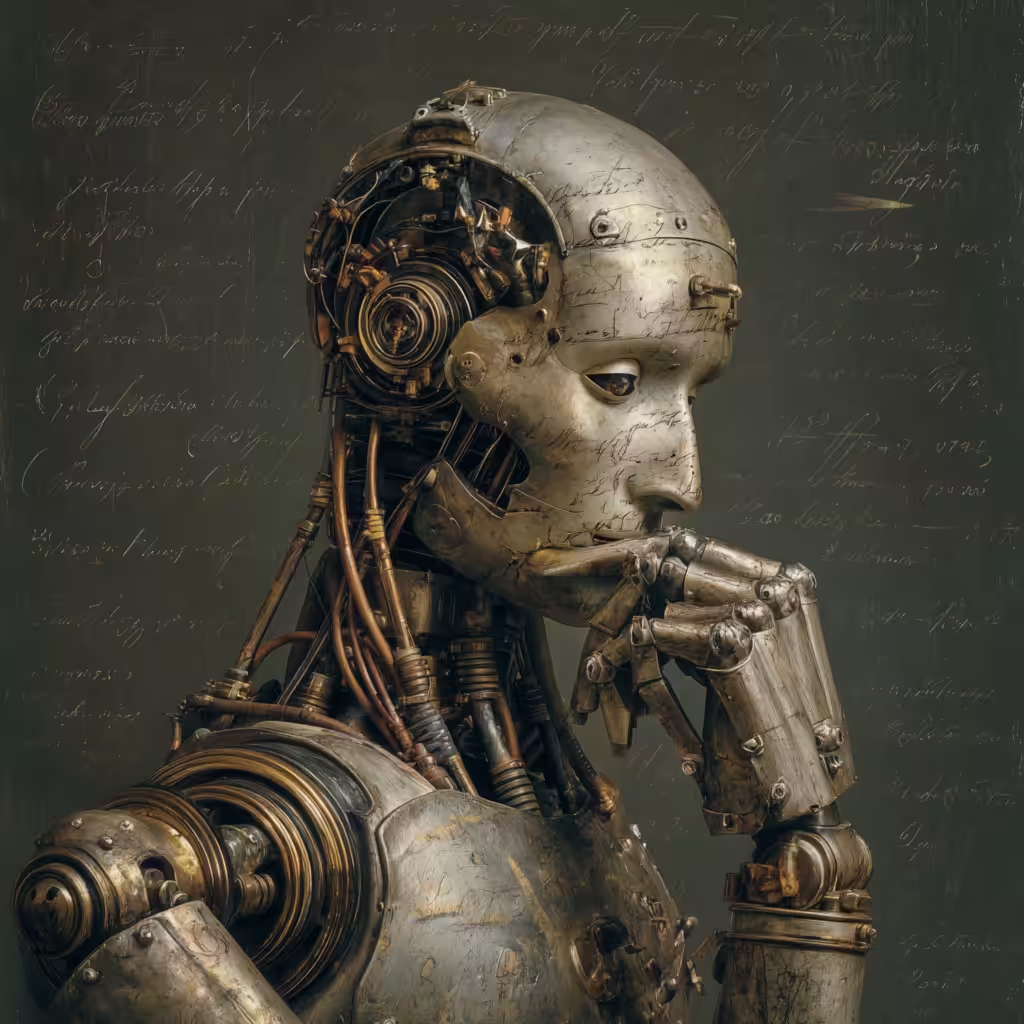

Leonardo is a master painter (and an inventor!), and he built a robot called “Plagiario”. Plagiario has perfect vision and has watched Leonardo paint every work from start to finish. Every color choice, every brush stroke, every correction. It has also studied the entire catalog of paintings ever created.

Over time, Plagiario becomes remarkably skilled at painting. If you ask for “a woman in a castle”, it can generate a composition that looks convincing: balanced colors, believable lighting, and elegant brushwork.

But something is often slightly wrong.

The castle windows may be oddly shaped. The perspective might feel subtly off. The woman may have six fingers. Or the structure may make no sense at all (why would the woman stand on the chair?).

Plagiario has never seen the real world. It has only observed paintings. From its point of view, a “woman” is simply a pattern that appears in art. It knows the visual statistics of paintings of women, but it does not know what a human actually is.

So Plagiario generates what appears to be a plausible painting of a woman in a castle, based on patterns it has observed. Most of the time, it gets it right. Sometimes it drifts into strange territory by extrapolating from patterns rather than understanding the world behind them.

LLMs are stuck in Plato’s cave.

Large language models work in a surprisingly similar way. They learn statistical patterns from vast amounts of text and then generate new text that follows those patterns.

LLMs: more precisely

An LLM models language as a position in a very high-dimensional space learned during training. A prompt is first broken into tokens (small pieces of text represented internally as integers). Each token is then mapped, through an embedding table, to a vector that gives its coordinates in that space. Tokens that tend to appear in similar contexts end up close together, allowing the model to capture relationships between linguistic elements.

For example, the tokens for cat and dog will end up close to each other in that space because they tend to appear in similar contexts (“the cat ran”, “the dog ran”, “feed the cat”, “feed the dog”). A word like engine will be much farther away because it appears in very different contexts.

The geometry of this space can also capture relationships between words; in early language models, it was observed that vector(“king”) – vector(“man”) = vector(“woman”) ~ vector(“queen”). These relationships are not programmed explicitly: they emerge from the statistical patterns the model learns during training.

Back to the vectors we built from our input text. They are only the starting point. The next step is the transformation phase: the model passes them through many layers of transformation. In each layer, each token looks at the other tokens in the prompt and decides how much to influence its representation, using a mechanism called attention. The result is that the vector for each token is repeatedly updated to incorporate information from the surrounding context.

For example, the word bank will start with the same initial vector whether it appears in “river bank” or “investment bank”. But as the model processes the sentence, attention causes the surrounding words to influence its representation, producing different vectors depending on the context.

After these transformations, the model has a vector representing the current context. This vector is then projected into a list of scores: one for every token in the vocabulary. After normalization, these scores become probabilities for the next token. The model selects one, appends it to the text, and repeats the process to generate the next word. Stopping happens either when it predicts a special end-of-sequence token or when the system generating the text reaches a predefined limit.

That’s all there is to it!

Yes. That’s it. Train on good data. Create a universe. Transform the input into coordinates in that universe. Iteratively adjust the coordinates to tighten the representation. When the vector is good enough, you have a context representation that summarizes the prompt. From that, you can compute probabilities for the next token.

But how could it be so smart? The following bet was made: “If we scale up the training model both in quality and size dramatically, the system will approximate intelligent discourse”, or, to quote Ken Thompson, “When in doubt, use brute force”.

We know GPT-2 was trained on 40 billion tokens and GPT-3 on 300 billion tokens. If you remember, GPT-3 was pretty impressive when it was released (2020). We are now at GPT-5 Tokyo Drift Edition Turbo Extended. Who knows how many tokens they used?

When you ask a question, the model transforms the text into an internal representation shaped by the patterns it learned during training. Based on that representation, it predicts what a plausible continuation of the text would be. Because it has been trained on vast amounts of language, that continuation often resembles a reasonable answer.

On top of that, chat applications wrap your input with hidden instructions (for example: “you are a helpful assistant”), retrieve context (for example, fetch the C++ standard to answer your C++ question), and add formatting instructions (for example: “be concise”).

This is how you get thoughtful replies to your prompt: a very large training set, and a structured prompt that includes instructions, context, and conversation history.

At no moment is there any actual thinking taking place. By that, I mean there is no underlying causal reasoning taking place. The system is generating the continuation of patterns it learned during training.

I know the hype is trying to sell you “AI is taking over”, but remember that many founders and investors have vested interests in making you believe that these tools are more than what they are.

Consequences for software engineering

The key difference between Plagiario and a human painter is that Plagiario has only seen paintings. A human painter has seen people, been inside buildings, and walked through landscapes. Paintings are just representations of those things.

Because of that, when an artist paints a woman, they are not reconstructing a pattern from previous paintings. The painter represents something they understand, not just visually, but through their place in the world, the emotions they convey, and the experiences associated with them.

Plagiario only knows the patterns that appear in paintings. Humans know the world those paintings represent and understand the system that produces them.

When it comes to programming, large language models operate almost entirely at the level of the representation. They are trained on vast amounts of source code, documentation, and discussions, and learn the statistical patterns that appear in those artifacts.

But they do not (and can’t !) build the underlying model of the system that produced the code: how the machine executes instructions, how the compiler transforms programs, or how the system behaves at runtime.

When a language model writes code, it generates something that resembles a valid solution. Most of the time, it works pretty well. Sometimes it produces things that cannot exist, such as imaginary SIMD instructions, because, statistically, they appear plausible even though they violate the system’s mechanics.

But most importantly, the model will output code based on the input, and in software engineering, the difficulty is rarely the act of writing code (although with C++, who knows?).

The hard part is building a correct mental model of the system you are working with: the machine, the compiler, the operating system, the data, the performance constraints, and the real-world problem you are trying to solve.

The code itself is only a series of instructions that, when executed by the computer, result in a solution to the problem.

But can’t we solve this by giving LLMs more input?

If you read my story about Plagiario again, you could say, “Well, maybe Leonardo can inject all the human experiences you described into Plagiario!”

The difference between a human mind and an LLM is not just about the inputs.

Yes, a GPT is a neural network, but it’s not playing in the same league as the human brain. It contains billions of parameters, but the structure itself is relatively simple and uniform.

The human brain contains roughly 86 billion neurons (you might have lost a couple reading this) connected by hundreds of trillions of synapses, organized into many specialized regions that perform different kinds of computation: perception, motor control, spatial reasoning, planning, memory, language, and many others. These systems interact continuously and operate across multiple time frames (from seconds to years).

Your brain is not a fixed network. Synapses strengthen or weaken as you learn, new connections are formed, and existing circuits are reorganized. This process, known as neural plasticity, happens continuously throughout life.

Language models do not work this way. Once training is complete, the network is essentially frozen. During normal use, the parameters do not change. The model applies the patterns it learned during training, but it does not update its internal structure as it interacts with the world.

“Ok, then we can just create a GPT that is continuously retrained!”

In principle, that sounds plausible, but there are two problems.

First, training a model like GPT involves adjusting billions of numerical parameters stored in floating-point matrices. Updating those parameters requires large-scale optimization across the entire network, which is computationally extremely expensive (think weeks, not minutes).

Secondly, and most importantly, the deeper difference lies in the system itself. In a language model, almost all the information the system possesses is encoded in its weight matrices. The model is essentially a very large numerical function. In the brain, by contrast, the synaptic connections represent only part of the information stored in the system. Neurons are complex electrochemical processes, synapses have multiple dynamic states, and learning involves biochemical mechanisms that operate across different time scales.

In other words, the LLM is an extraordinarily powerful tool, whereas the human mind is the complete workshop. Your tool may outperform the workshop for certain specific tasks, but it lacks the workshop’s flexibility: the ability to combine tools, adapt them, and add new ones as needed.

The important takeaway

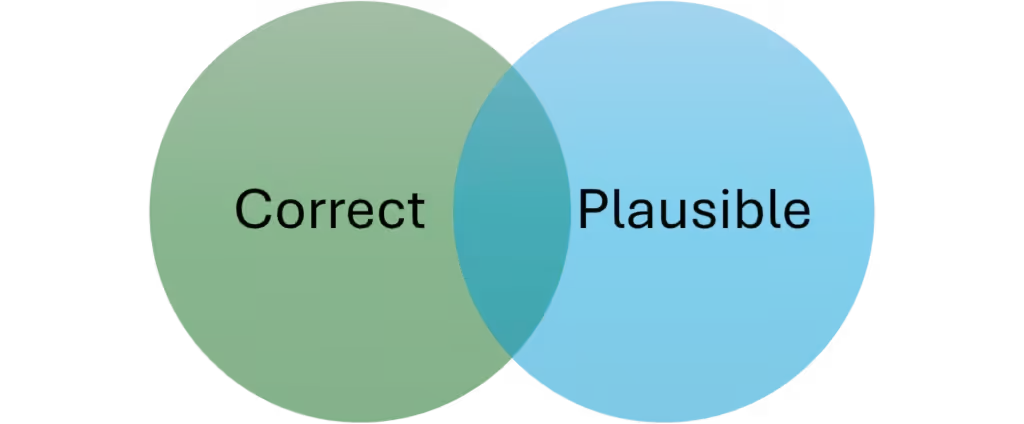

LLMs generate plausible solutions; users determine whether they are correct. There is an intersection between plausible and correct, and this is where LLMs deliver value.

For the nitpickers out there: yes, there are correct and implausible solutions. Examples: surprising behavior in a complex system, APIs used incorrectly in the wild, a quotation widely misattributed, emerging science, or what I had for dinner last night. Will be left as an exercise to the reader: how good at finding alpha is a system incapable of finding implausible outcomes?

An LLM does not search for the correct answer: it generates the continuation of text that best fits the patterns it learned during training. It doesn’t know what it is doing. And yet, despite this limitation, it is often surprisingly effective at giving good answers.

How? Why? Let’s take software development. The truth is that a large portion of routine programming work already consists of recombining known patterns: familiar APIs, common algorithms, and standard architectural structures. In that sense, many programmers already operate closer to pattern assembly than to deep system modeling. From a purely operational, and perhaps cynical, point of view, language models often perform this pattern-matching task faster.

So should we all (engineers, doctors, lawyers…) apply at McDonald’s (also $BTC hasn’t recovered either)? Yes, probably, but also, wait, no, hold on.

The scarce skill in engineering is not the ability to type correct syntax. The real skill is judgment: evaluating whether a solution is correct, appropriate for the system it runs in, and robust under real constraints.

I use the word judgment deliberately. This is not a matter of taste. It is the ability to determine whether the output is correct. Remember: I’m an engineer, I have no taste.

How can you judge? You need a mental model of the system. Without that model, you cannot reliably distinguish between code that merely looks plausible and code that works.

This is where language models become tools rather than replacements. Used well, they can accelerate development tremendously. Used poorly, they become a slot machine: repeatedly generating variations and hoping that one of them works.

The productive approach, which we will delve into further in future posts, is: shepherd the model through the valley of darkness, evaluate its output, and integrate it into a disciplined development process.

In the long run, this may reshape programming itself. Programming languages exist largely to remove ambiguity from human communication with machines. Natural language is far more expressive, but also far more ambiguous. Programming is the act of formalizing a solution without ambiguity.

Future programming systems will likely combine both: natural language to express intent and exploration, agents that work together to build the scaffolding, and formal languages to precisely specify the parts of the system where ambiguity cannot be tolerated.

Writing software is going to be faster and easier, and that means the number of people who can and will write software (directly or indirectly) is going to rise significantly. The paradox is known as the Jevons Paradox: increased efficiency of a resource leads to increased consumption of that resource.

Parting words

I’ll conclude this post with two thoughts. As we have seen, LLMs are crude compared to a human brain. To achieve almost parity in output quality, they have to put in several orders of magnitude more effort in time and energy. Still…

- They can deliver tremendous value as “crystalized intelligence” agents, and innovation won’t stop. Better hardware, better concepts: we’re just getting started.

- It’s not necessarily a useful endeavour to replicate human-like intelligence. We already have human-like intelligence. What we need are tools that are strong where we are weak.

I hope you enjoyed this post. In the next installment of this series, I’ll get into the guidelines and procedures we have at Quasar to maximize LLM productivity gains, especially in software development.